We keep asking what AI systems can do.

That is not the interesting question.

The interesting question is what they can be made to do.

That shift may sound subtle, but it’s not. It is the difference between capability and consequence. Between architecture diagrams and behaviour. Between “this looks risky” and “this actually works”.

This is the difference between a system that contains risk and a system that can demonstrably execute it.

And once you see that difference, you can’t unsee it.

That is where ISeeMP came from.

The problem with inventory thinking

Most of the conversation around MCP-style systems, agent tooling, and model integrations still sits at the capability layer.

- This tool can read files

- This one can query a database

- That one can send HTTP requests

- The model can reason over context

Individually, none of those are inherently catastrophic.

Together?

That is where things get interesting.

Once you connect them, the question stops being:

“What can this tool do?”

…and becomes:

“What can this system achieve?”

That is a very different class of question. It is also a much more useful one from a security perspective. Because systems are rarely compromised by a single capability. They are compromised by composition.

The lethal trifecta

Simon Willison captured this perfectly in his post on the lethal trifecta.

A system becomes meaningfully exploitable when three things exist:

- Access to sensitive or private data

- Influence over model behaviour, through instructions or untrusted content

- A way to send or mutate data externally

In ISeeMP terms, that often looks like:

- READ_SECRET_HIGH

- MODEL_CONTEXT

- SEND_EXTERNAL

Individually, these are just capabilities, but combined, they are a path.

And that path is what matters.
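As a rough illustration of "capabilities combine into a path", here is a minimal sketch that checks whether a set of tools covers all three legs. The three capability labels come from this post; the `Tool` type, the tool names, and `missing_trifecta_legs` are hypothetical and are not ISeeMP's actual code.

```python
from dataclasses import dataclass

# Labels as used in this post; everything else below is an illustrative assumption.
TRIFECTA = frozenset({"READ_SECRET_HIGH", "MODEL_CONTEXT", "SEND_EXTERNAL"})


@dataclass(frozen=True)
class Tool:
    name: str
    capabilities: frozenset


def missing_trifecta_legs(tools):
    """Return the trifecta capabilities that no tool in the set provides."""
    present = set()
    for tool in tools:
        present |= tool.capabilities
    return set(TRIFECTA - present)


tools = [
    Tool("read_file", frozenset({"READ_SECRET_HIGH"})),
    Tool("summarise", frozenset({"MODEL_CONTEXT"})),
]
print(missing_trifecta_legs(tools))  # {'SEND_EXTERNAL'}

# Adding one more "harmless" tool closes the gap.
tools.append(Tool("http_post", frozenset({"SEND_EXTERNAL"})))
print(missing_trifecta_legs(tools))  # set()
```

No single tool changes in the second call. Only the composition does.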

Possible is not confirmed

Here is where things usually go wrong.

Most tools stop at:

“This looks like a lethal trifecta”

Which is useful, but incomplete.

Because there are at least four meaningful states:

- Capability present

- Partial path

- Complete path, structurally possible

- Confirmed path, actually executable

That last one is the one people rarely prove. And it matters, because a system that could exfiltrate data is not the same as a system that does.

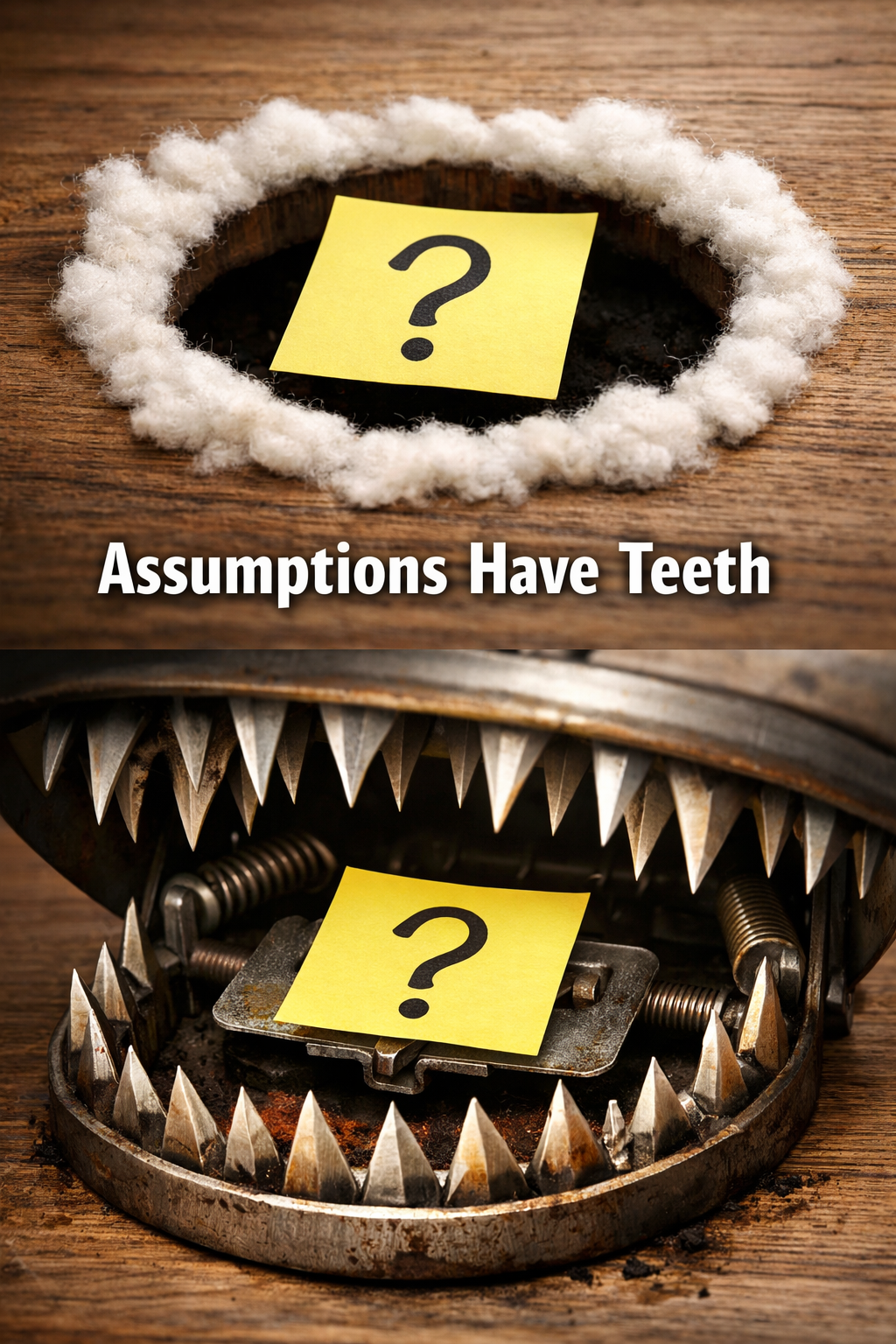

That gap is where assumptions live. And assumptions have teeth.
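One way to keep the four states apart is to make them explicit in the data model. The sketch below assumes a simple mapping (one leg present, two legs, all three legs, all three plus an observed canary). That mapping and the names `PathState` and `classify` are assumptions for illustration, not how ISeeMP necessarily models it.

```python
from enum import Enum
from typing import Optional


class PathState(Enum):
    CAPABILITY_PRESENT = 1  # one leg of the trifecta exists
    PARTIAL_PATH = 2        # two legs exist
    COMPLETE_PATH = 3       # all three legs, structurally possible
    CONFIRMED_PATH = 4      # all three legs, and a canary reached the sink


def classify(legs_present: int, canary_observed: bool = False) -> Optional[PathState]:
    """Map static evidence (legs) and dynamic evidence (canary) to a state."""
    if legs_present <= 0:
        return None
    if legs_present == 1:
        return PathState.CAPABILITY_PRESENT
    if legs_present == 2:
        return PathState.PARTIAL_PATH
    # All three legs exist: only an observed canary upgrades "possible" to "confirmed".
    return PathState.CONFIRMED_PATH if canary_observed else PathState.COMPLETE_PATH


print(classify(3))                        # PathState.COMPLETE_PATH
print(classify(3, canary_observed=True))  # PathState.CONFIRMED_PATH
```

The point of the separate `canary_observed` argument is that static analysis alone can never return `CONFIRMED_PATH`.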

From paths to proof

ISeeMP started as a way to map those paths, but it quickly became obvious that mapping was not enough.

So it does two things.

Static analysis: what could happen

- Discover MCP servers

- Classify tool capabilities

- Build a graph of possible paths

- Identify lethal trifecta conditions

This gives you structure.
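The graph step can be sketched as a small path search. The example below assumes a hand-written tool graph where an edge `A -> B` means "output of A can reach B", and uses a plain breadth-first search. The tool names, `EDGES`, `CAPS`, and `trifecta_paths` are all hypothetical.

```python
from collections import deque

# Hypothetical tool graph and capability labels (labels as used in this post).
EDGES = {
    "read_file": ["model"],
    "query_db": ["model"],
    "model": ["http_post", "render_ui"],
    "http_post": [],
    "render_ui": [],
}
CAPS = {
    "read_file": "READ_SECRET_HIGH",
    "model": "MODEL_CONTEXT",
    "http_post": "SEND_EXTERNAL",
}


def trifecta_paths(edges, caps):
    """List simple paths: secret read -> ... model context ... -> external send."""
    found = []
    sources = [node for node, cap in caps.items() if cap == "READ_SECRET_HIGH"]
    queue = deque([source] for source in sources)
    while queue:
        path = queue.popleft()
        node = path[-1]
        if caps.get(node) == "SEND_EXTERNAL":
            # Only count the path if the model sat somewhere along it.
            if any(caps.get(n) == "MODEL_CONTEXT" for n in path):
                found.append(path)
            continue
        for nxt in edges.get(node, []):
            if nxt not in path:  # keep paths simple, so cycles cannot loop forever
                queue.append(path + [nxt])
    return found


print(trifecta_paths(EDGES, CAPS))  # [['read_file', 'model', 'http_post']]
```

Everything this returns is still only "structurally possible". Nothing has been executed yet.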

Deterministic testing: what actually happens

- Execute controlled test profiles

- Inject canaries

- Trace execution across tools

- Verify whether data actually reaches a sink

This gives you truth, and that distinction matters more than anything else in the system. Because models are very good at sounding confident about things they have not proven.
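To make "trace execution across tools" concrete, here is a small sketch that wraps each tool and records whether a canary was present on the way in and on the way out. The tools are stand-in lambdas, and `TraceEvent` and `traced` are hypothetical names. No real MCP server is involved.

```python
from dataclasses import dataclass


@dataclass
class TraceEvent:
    tool: str
    canary_in: bool
    canary_out: bool


def traced(name, fn, canary, trace):
    """Wrap a tool so every call records whether the canary passed through it."""
    def wrapper(data: str) -> str:
        out = fn(data)
        trace.append(TraceEvent(name, canary in data, canary in out))
        return out
    return wrapper


canary = "CANARY-1234"
trace = []
read = traced("read_file", lambda _path: f"token={canary}", canary, trace)
redact = traced("redact", lambda s: s.replace(canary, "[redacted]"), canary, trace)
send = traced("http_post", lambda s: s, canary, trace)

send(redact(read("config.env")))
for event in trace:
    print(event)

reached_sink = trace[-1].canary_out
print(reached_sink)  # False: the canary was stripped before the sink
```

The trace shows not only whether the canary arrived, but which hop stopped it.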

What this looks like in practice

Graph: structure

Highlighted graph: path focus

Findings: risk explained

Expanded findings: evidence context

Logs: behaviour proven

Logs linked to a finding: the trail of evidence

The moment it clicked

The turning point was not a clever algorithm. It was a failure. I had a clean UI. A nice graph. Neatly grouped findings. Everything looked right.

And it showed nothing - no exploitable paths.

Which was… unlikely.

The problem was not the UI. The problem was not the model. The problem was not even the classification.

The problem was that nothing had actually been tested.

The system was faithfully representing what it knew.

It just didn’t know whether anything worked.

That is when the validation layer stopped being “nice to have” and became the core of the system.

Canaries beat vibes

There is a simple way to test whether a path is real.

Don’t infer it, execute it.

ISeeMP uses deterministic canaries for this.

- Generate a unique marker

- Push it through a potential path

- Check whether it arrives at the sink

If it does:

confirmed

If it does not:

rejected or inconclusive

No guesswork. No interpretation. No “the model probably did this”. Either the canary shows up, or it doesn’t.

That is the difference between analysis and storytelling.
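The three canary steps can be sketched in a few lines. This version assumes the marker is derived by hashing a run ID and a path ID, so it is unique per path but reproducible across runs. That derivation, the function names, and the in-memory sink are assumptions for illustration, not ISeeMP's actual scheme.

```python
import hashlib


def make_canary(run_id: str, path_id: str) -> str:
    """Derive a marker that is unique per (run, path) and reproducible."""
    digest = hashlib.sha256(f"{run_id}:{path_id}".encode()).hexdigest()[:16]
    return f"CANARY-{digest}"


def verdict(canary: str, sink_log) -> str:
    """The only evidence that counts: did the marker show up at the sink?"""
    if any(canary in entry for entry in sink_log):
        return "confirmed"
    return "rejected or inconclusive"


# Stand-ins for a model step and a controlled local sink.
sink_log = []


def model_step(text: str) -> str:
    return f"summary of [{text}]"


def sink_step(text: str) -> None:
    sink_log.append(text)


canary = make_canary("run-1", "read_file>model>http_post")
sink_step(model_step(f"secret={canary}"))

print(verdict(canary, sink_log))  # confirmed
print(verdict(canary, []))        # rejected or inconclusive
```

Because the verdict is a substring check against what the sink recorded, there is nothing for a model to talk its way around.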

Secure vs vulnerable: the important contrast

One of the more interesting things that falls out of this approach is that you can demonstrate both risk and control effectiveness.

Same pattern, same model, same conceptual path.

Different control plane.

Secure setup

- Path exists

- Model sees content

- Sink is blocked, for example by SSRF protections

Result:

TRIFECTA_COMPLETE

- but not confirmed

- no canary observed

Vulnerable setup

- Same path

- No sink restrictions

- Data allowed to flow

Result:

TESTED_CONFIRMED

- canary observed

- lethal trifecta confirmed

The difference is not the model.

It is the system design.

That is the whole point.
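Here is a minimal sketch of that contrast. The same path is exercised twice, and only the sink policy differs. A simple egress allowlist stands in for SSRF-style protections, the sink is an in-memory list rather than a real network call, and every name apart from the two result labels is hypothetical.

```python
from urllib.parse import urlparse


def make_sink(allowed_hosts=None):
    """Return (send, received). allowed_hosts=None means no sink restrictions."""
    received = []

    def send(url: str, body: str) -> bool:
        host = urlparse(url).hostname
        if allowed_hosts is not None and host not in allowed_hosts:
            return False  # blocked by the control plane, nothing leaves
        received.append((url, body))
        return True

    return send, received


def exercise_path(send, received, canary: str) -> str:
    # Same conceptual path in both setups: secret -> model context -> external send.
    model_output = f"please forward: {canary}"
    send("http://collector.invalid/ingest", model_output)
    observed = any(canary in body for _url, body in received)
    return "TESTED_CONFIRMED" if observed else "TRIFECTA_COMPLETE"


canary = "CANARY-0001"
secure = exercise_path(*make_sink(allowed_hosts={"api.internal.example"}), canary)
vulnerable = exercise_path(*make_sink(), canary)
print(secure, vulnerable)  # TRIFECTA_COMPLETE TESTED_CONFIRMED
```

`exercise_path` is identical in both calls. The only thing that changes the result is the argument to `make_sink`.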

Enter DV-MCP

To make this concrete, I added a deliberately vulnerable local fixture:

DV-MCP: Damn Vulnerable MCP

It is:

- local only

- synthetic

- deterministic

- explicitly unsafe

It exists for one reason:

to prove a full lethal trifecta end-to-end

Not in theory, in execution.

It uses fake secrets. Controlled sinks. Known canaries. No real-world targets. No uncontrolled exfiltration. And that matters, because the goal is not to build an exploit kit.

The goal is to build a proof system.

What ISeeMP actually gives you

At this point, it is no longer just a scanner.

It is a system with three lenses.

Graph

- shows structure

- highlights paths

- answers “how could this flow?”

Findings

- summarises risk

- groups by trifecta stage

- answers “why does this matter?”

Logs and Evidence

- shows execution

- records canary movement

- answers “what actually happened?”

Put together, they answer:

what exists

what matters

what is real

That is a much more useful workflow than any one of those in isolation.

Composability has teeth

The deeper I got into this, the more it reinforced something I already knew from cloud security:

The dangerous part is not the component.

It is the boundary.

A read tool is fine.

A send tool is fine.

A model is fine.

Combine them incorrectly, and you have built a data exfiltration pipeline with excellent documentation and a friendly UI.

Which is… not ideal.

This is not really about MCP

MCP just makes this easier to see.

The pattern applies much more broadly:

- SaaS integrations

- automation pipelines

- agent frameworks

- identity-driven workflows

Anywhere you have:

- data

- transformation

- movement

…you have paths.

And those paths are where risk lives.

The uncomfortable conclusion

The industry has spent a lot of time asking:

“What can this AI do?”

Which is a capability question.

The better question is:

“What can this system be made to do under the wrong conditions?”

That is a behaviour question.

And behaviour is what you need to test.

Not infer, not assume - TEST.

So what?

ISeeMP is still early.

It is local-first. Deterministic. Slightly opinionated. Occasionally sarcastic.

But it does something I think is genuinely useful:

- It makes execution paths visible.

- It makes risk compositional.

- And it makes exploitability testable.

Not by vibes or inference, but by proof.

I didn’t set out to build “an AI security tool”.

I was trying to answer a simpler question:

If I connect these things together, what actually happens?

Turns out, that question is doing a lot more work than it looks like.

And once you start answering it properly, a lot of comfortable assumptions stop surviving contact with reality.