Image by Chris Yates on Unsplash

In my last post, Grav in Azure part 6 - Optimising Grav for performance and security, I demonstrated how to configure Grav to optimise the performance and security of your Grav website.

So now that we have a responsive, resilient and secure blog website, running on Azure, front-ended by Cloudflare, we’re done, right?

“Chance favors the prepared mind” - Louis Pasteur

What you will need

- A grav website, hosted on a Windows Web App in Azure.

As anyone who has ever worked in IT will tell you - we’re still not done! It’s all well and good having a service with some resilience, such that you can handle a number of failure scenarios and still have a service - but what happens when the worst happens? (Bear in mind that we have no resilience on the Azure back-end here. For anything mission critical you would absolutely want that, rather than relying solely on Cloudflare on the front-end.)

If you don’t have a backup that you trust and can restore from quickly, then you don’t have a reliable service at all.

Backup Options

In exploring options for backup, I started with what is available in Azure itself (as you’d expect as the back-end infrastructure resides there).

1. Web Apps Backup

Azure Web Apps do have backup capabilities (of which you can [read more here](https://go.microsoft.com/fwlink/?linkid=847966)) but as we're running on a Free (F1) instance for testing and a Dev/Test (D1) instance for the live site, we can't use this feature as it's not available on a shared instance - you must be using at least a Standard instance:

- Web Jobs

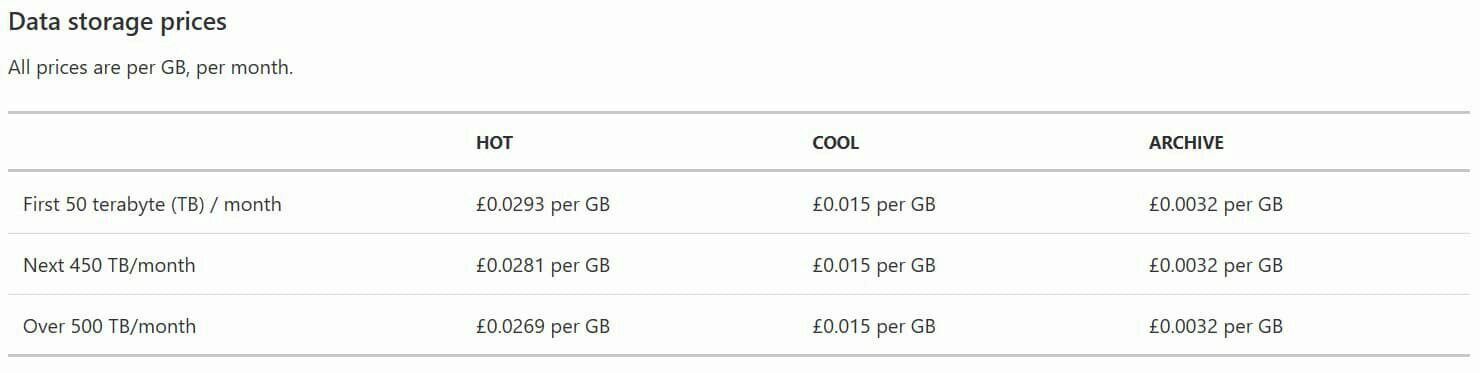

I then considered creating a Web Job which could run on a schedule to back up the site content to Azure Blob storage (which is pretty cheap for Gen2 storage, even with increased resilience such as GRS ([Geo-Redundant Storage](https://azure.microsoft.com/en-gb/pricing/details/storage/))).

For example, for Gen2 Blob Storage, you're looking at around 3p (yes, 3 pence) per GB stored per month in West Europe (pricing varies by region), for your first 50 TB.

Now for a blog, you're not going to need anything like that much storage. My current site uses around 230MB (of a 1GB quota permitted in a D1 Web App). So 3p per month would store the live site and 3 backups of it at current size (obviously growth is a factor). Even if you want nightly backups, you're looking at 8GB per month or 24p.

You don't only pay for the storage space itself - you also pay for usage and operations, e.g. putting data in, reading it, deleting it etc. But again you're talking small beer here - 8p per **10,000** *Writes*, 8p per **10,000** *List and Create Container Operations*, a third of a penny per **10,000** *Read Operations* - you get the idea.

You've also got to consider that if you want more than LRS (locally redundant storage), you'll pay for the replication bandwidth - again in West Europe, that's around 1.5p per GB of replication traffic - so even with under 8GB of backups per month on my site as it is currently sized, that's 12p!

All in all, I could back up my site each night for as little as £0.39 per month.
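As a rough back-of-the-envelope check, the arithmetic above can be sketched in a few lines of Python. This uses only the West Europe rates quoted in this post (which will drift over time), and the function and parameter names are purely illustrative. It also assumes one upload per night and ignores read and list operations, so treat the result as a ballpark that lands within a few pence of the figure above rather than a quote.

```python
# Rates quoted above for West Europe, in pence (they vary by region and over time).
STORAGE_PENCE_PER_GB = 3.0
REPLICATION_PENCE_PER_GB = 1.5
WRITE_PENCE_PER_10K = 8.0


def monthly_backup_cost_pence(stored_gb: float, writes: int) -> float:
    """Estimate a month's backup bill in pence from the rates above."""
    storage = stored_gb * STORAGE_PENCE_PER_GB
    replication = stored_gb * REPLICATION_PENCE_PER_GB
    operations = writes / 10_000 * WRITE_PENCE_PER_10K
    return storage + replication + operations


# Assumption: one nightly upload and roughly 8GB retained over the month.
estimate = monthly_backup_cost_pence(stored_gb=8, writes=30)
print(f"About {estimate:.1f}p per month")
```

The write operations barely register at this scale; storage and replication are what you actually pay for.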

Decisions, decisions

*“I must have a prodigious amount of mind; it takes me as much as a week, sometimes, to make it up!” ― Mark Twain*

So you’d assume this is the route I’ve gone…

However, again because I’m using F1 and D1 Web App instances, in an effort to see how minimal I can make this from a cost perspective, I can’t have a Web Job that runs either continuously or on a schedule (perhaps to make me a good neighbour to those sharing the infrastructure with me, since these are not dedicated instances just for me, or if you’re cynical, to encourage me to move up a tier or two!).

Which is a shame, as I had the beginnings of a Web Job that would invoke Grav’s own backup command to take a backup of the entire site, and then move the backup offsite, as it were, to more resilient storage (the plan was to move it to Azure Blob storage and remove the backup file from the site itself).
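To give a flavour of what that job might look like, here is a minimal sketch in Python. It is not the Web Job I started writing. It assumes `php` is on the PATH, that Grav's CLI lives at `bin/grav` and drops its zip archives in a `backup/` folder under the site root, and that the `azure-storage-blob` package is installed. The function names, container name and connection string are all hypothetical.

```python
import subprocess
from pathlib import Path


def run_grav_backup(site_root: Path) -> None:
    """Invoke Grav's CLI backup command from the site root."""
    # Assumes `php` is on the PATH and the Grav CLI lives at bin/grav.
    subprocess.run(["php", "bin/grav", "backup"], cwd=site_root, check=True)


def newest_backup(backup_dir: Path) -> Path:
    """Return the most recently modified .zip in the backup folder."""
    archives = sorted(backup_dir.glob("*.zip"), key=lambda p: p.stat().st_mtime)
    if not archives:
        raise FileNotFoundError(f"No backup archives found in {backup_dir}")
    return archives[-1]


def move_offsite(archive: Path, connection_string: str, container: str) -> None:
    """Upload the archive to Blob storage, then remove the local copy."""
    # Imported here so the helpers above work without the SDK installed.
    from azure.storage.blob import BlobServiceClient

    service = BlobServiceClient.from_connection_string(connection_string)
    blob = service.get_blob_client(container=container, blob=archive.name)
    with archive.open("rb") as data:
        blob.upload_blob(data, overwrite=True)
    # Only reached if the upload did not raise.
    archive.unlink()
```

Stitched together, a run would be `run_grav_backup(site)` followed by `move_offsite(newest_backup(site / "backup"), connection_string, "grav-backups")`. Deleting the local archive only after a successful upload keeps the 1GB quota in check without risking the only copy.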

I do however plan to revisit this - as it’s possible to customise your Kudu deployment script that runs when you commit and push to your repo, triggering the webhook and deploying your new code to your site. So a likely scenario here is to have the custom script trigger a backup when you push new code (or perhaps only if you commit a particular trigger file).
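The "trigger file" idea could be as simple as the check below, called from the custom deployment script before it copies files to the site. This is only a sketch of the decision logic: the file name `.backup-trigger` is something I've made up for illustration, and how the script gets hold of the checked-out source folder is left to the deployment script itself.

```python
from pathlib import Path

TRIGGER_FILE = ".backup-trigger"  # hypothetical name, pick your own


def backup_requested(source_dir: Path, trigger: str = TRIGGER_FILE) -> bool:
    """True when the checked-out source contains the trigger file."""
    return (source_dir / trigger).is_file()
```

A deployment script would call `backup_requested(Path(source_folder))` and only kick off a backup when it returns `True`, so routine pushes deploy as normal.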

Which leads me to…

3. Grav Git-Sync plugin

As it turns out, those nice people at Grav have produced a plugin for Grav which works as a two-way sync between your Grav website and your git repository. You already have one-way sync by connecting your web app to your repo - if you push a change to the repo, Azure knows via a webhook and automatically deploys your site again using the latest code. Git-Sync will do this for you if you don’t already have it, but what it also adds is a reverse sync.

You might wonder why this would work as a backup - well for one thing, Grav advise you to only amend files in the user/ directory, and nothing in system/ etc.

Even if you hold true to this advice, you will have logs etc which are on the web app and not in the repo, so no backup of logs etc.

So, with Git-Sync, if you make a change directly on the back end, whether automatic (such as log file creation) or manual, it is committed to your repo automatically.

It also means you can choose to create/edit your blog content via the Admin plugin and have it automatically backed up. I prefer to write my content using one of two workflows:

- Laptop: I write all my content in Visual Studio Code using the Markdown syntax, and use the inbuilt git functionality to add/commit/push up to my repo, and then Azure automatically deploys courtesy of the aforementioned webhook.

- Mobile: I use the Markdown app on iOS to write my posts in Markdown syntax, then I use Git2Go to do the git work (create the folder for the blog post, add the markdown file, any images etc).

But you may prefer to write your content in the browser, using a nice web GUI through an admin console. If you do, anything you publish in this way will exist only in your web app in Azure and not be backed up anywhere (unless you are on a higher tier of Web App and using the Backups functionality).

I’m currently testing Git-Sync on a non-production branch of my repository against a staging web app and it looks promising, but before I run on my live site, I will obviously do more testing and then I will blog about it.

One thing to be aware of though (more details in the future blog post) - the default path for the git executable that the plugin looks for is simply `git`. But for an Azure Web App (a Windows one, running IIS), you need the path to be `"D:\Program Files\Git\bin\git.exe"` - the quotes are important, you need to quote/escape the entire path.

As a future development I may also do what I envisaged in option 2 above and customise my deployment script so I can back up to Azure Blob storage. The main reason, apart from making my solution more Azure-centric, is that I don’t know what Bitbucket’s Business Continuity/Disaster Recovery processes are like - if they have an issue, and their resilience and backups fail, the only places my site content and code reside are inside the Azure web app, and perhaps locally on my laptop and mobile phone. That might be fine for a personal blog, but I’d never recommend that for anything even remotely mission critical!
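If you're wondering why the quotes matter, the space in `Program Files` is the culprit. The snippet below is not how the plugin itself runs git. It's just a small Python illustration, using the standard library's `shlex` in non-POSIX mode as a stand-in for a command line being split on whitespace.

```python
import shlex

GIT_PATH = r"D:\Program Files\Git\bin\git.exe"

# Unquoted, the space in "Program Files" splits the path in two.
unquoted = shlex.split(f"{GIT_PATH} status", posix=False)

# Quoted, the whole path survives as a single token.
quoted = shlex.split(f'"{GIT_PATH}" status', posix=False)

print(len(unquoted), unquoted[0])
print(len(quoted), quoted[0])
```

In the unquoted case the "program" being run ends up as `D:\Program`, which doesn't exist, so the command fails before git is ever reached.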

As ever, thanks for reading and feel free to leave comments below.

In the next post in this series, I’ll take a look at backup options, and areas that may benefit from further automation.