Image by John Salvino on Unsplash

As you may have guessed from my last post, I’ve been quite impressed with the serverless offerings in Azure of late. As a lifelong infrastructure person, the idea of throwing together functional code that you can access from anywhere, without first standing up infrastructure, is still a little new and surreal to me - but it’s an experience I’m enjoying nonetheless.

I recently saw a tweet that likened Azure Functions and Azure Logic Apps to the glue of Azure, and increasingly I can’t help but share that view.

With that in mind, I thought I’d share another piece of Azure glue that you might find useful - Function Proxies.

Background

I’m currently in the process of developing a replacement for my current home security camera automation workflow and am migrating from the following setup:

- Motorola wifi-connected security cameras

- Raspberry Pi Model 3B + (which I use for more than just security cameras)

- Motion Project (an excellent open-source motion detection application for Unix/Linux based systems)

- Jeremy Blythe’s Google Drive Uploader python script - to upload motion video clips to Google Drive

- mpack - to email motion video snapshot images to an IFTTT email trigger, which sends a mobile notification to the IFTTT app on our iPhones.

Why change from the current setup?

- I wanted to try to do it all in Azure, as the current setup was put together over Christmas/New Year 2017/2018, before I started exploring Azure

- I was beginning to run out of storage space in Google Drive, and reckoned I could get more storage for my money in Azure than by just buying more storage from Google

- I was finding that it took around 2 minutes from motion being detected to receiving the mobile notification - a delay I believed to be partly down to Gmail and partly down to IFTTT’s email trigger.

- Google Drive doesn’t have automated housekeeping, so I either have to keep tidying uploaded files myself, or automate it

So, I’ll blog about parts of the new workflow as I complete them or they are in a good enough proof-of-concept state to be worth blogging about.

Progress to-date

So far, I’ve done the following:

- Written a new Python script to upload images/video clips to Azure blob storage

- Written a simple JavaScript page, hosted in the storage account (but not as a Static Website), that retrieves and displays the list of video blobs with an HTML5 video player. It needs more work, but proves the concept well enough.
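As a rough illustration of those two pieces (not the actual script or page), both come down to plain Blob service REST calls: Put Blob to upload a clip and List Blobs to enumerate what is there. The sketch below uses only the Python standard library and assumes a SAS token is used for authorisation; the account name, container name and token are hypothetical placeholders.

```python
import urllib.request
import xml.etree.ElementTree as ET
from typing import List
from urllib.parse import quote

# Hypothetical values for illustration only; substitute your own.
ACCOUNT = "mystorageaccount"
CONTAINER = "camera"
SAS_TOKEN = "sv=...&sp=rcwl&sig=..."  # SAS query string without the leading '?'


def blob_url(blob_name: str = "", extra_query: str = "") -> str:
    """Build a container or blob URL authorised by the SAS token."""
    path = f"{CONTAINER}/{quote(blob_name)}" if blob_name else CONTAINER
    query = f"{extra_query}&{SAS_TOKEN}" if extra_query else SAS_TOKEN
    return f"https://{ACCOUNT}.blob.core.windows.net/{path}?{query}"


def build_upload_request(blob_name: str, data: bytes, content_type: str) -> urllib.request.Request:
    """Prepare a Put Blob request; pass it to urllib.request.urlopen() to send it."""
    return urllib.request.Request(
        blob_url(blob_name),
        data=data,
        method="PUT",
        headers={
            "x-ms-blob-type": "BlockBlob",  # header required by Put Blob
            "Content-Type": content_type,
        },
    )


def parse_blob_names(listing_xml: str) -> List[str]:
    """Extract blob names from the XML body returned by List Blobs."""
    root = ET.fromstring(listing_xml)
    return [name.text for name in root.iterfind("./Blobs/Blob/Name")]
```

To list the container, request `blob_url(extra_query="restype=container&comp=list")` and feed the response body to `parse_blob_names`. The JavaScript page makes the same kind of List Blobs call from the browser.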

Requirements

Now, it goes without saying that I want this to be no less secure than the current solution - more so if possible.

So, I obviously don’t want my storage account or container/blobs to be available for anyone to access - I only want those who should have access (my wife and me) to be able to view them.

But I’m also storing static web content with client-side scripting in the same account/container - so how do I make that accessible but also secure?

The solution

In researching Azure storage security I came across Shared Access Signatures (SAS) (more on those in a future post). While a SAS might work for the webpage to access the blobs, I still wanted to secure the front door and not have the entire storage account or container set up with anonymous access.

The solution? Function apps - specifically the Azure Functions Proxies capability.

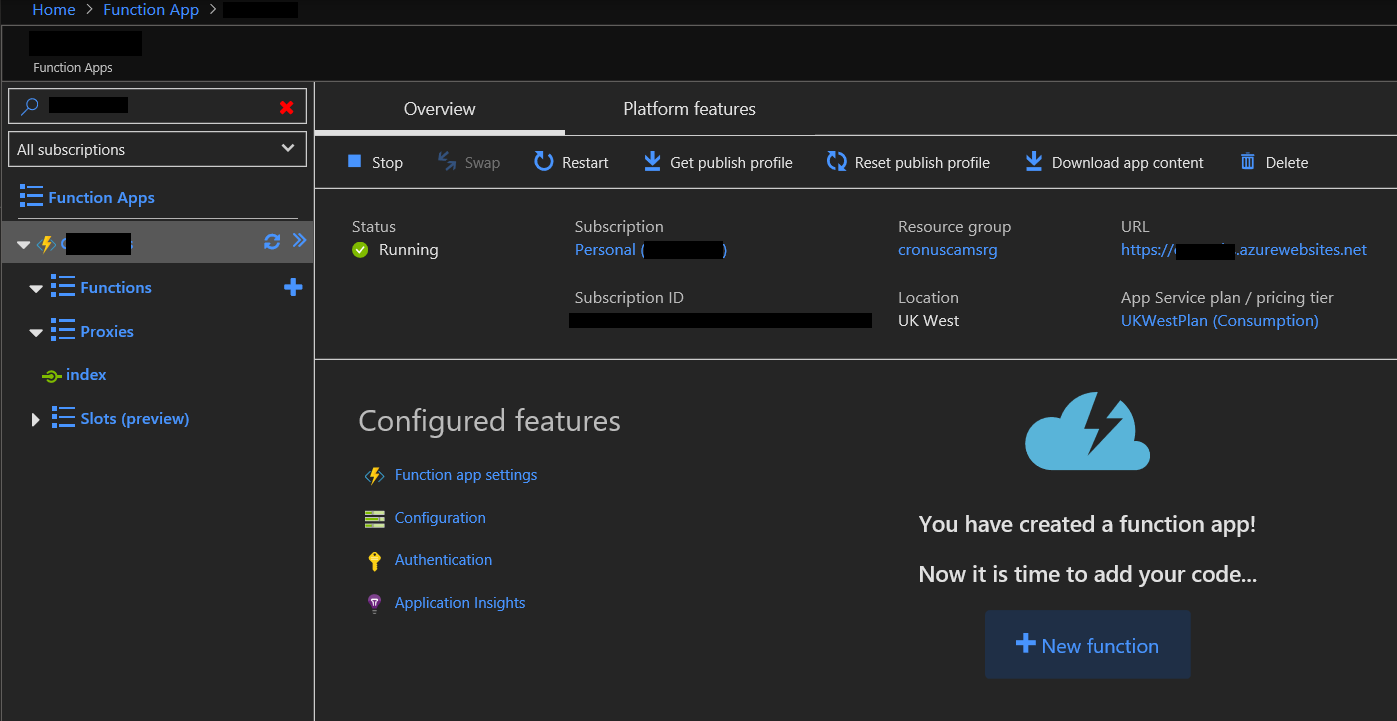

I set up a new function app (see the URL above for a quickstart guide to creating one).

Creating a function proxy (a guide)

In my case, I’ve set mine up as follows (as viewed from the Azure Portal):

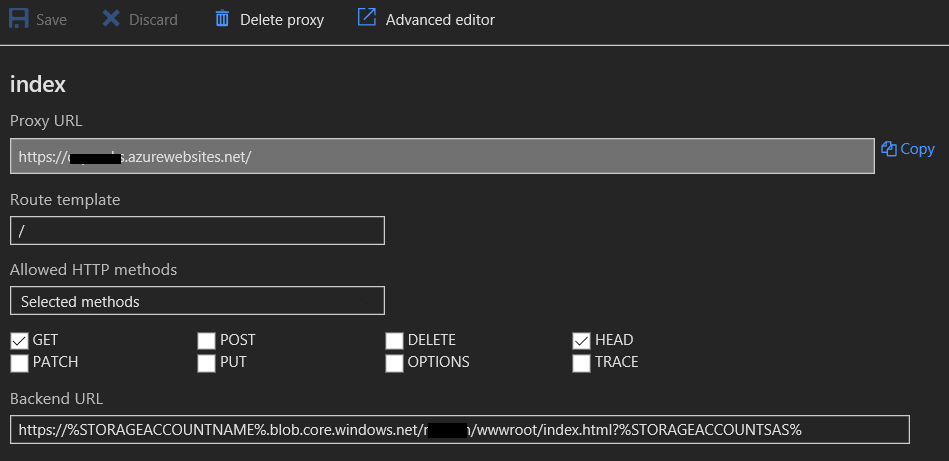

Clicking on Proxies to expand it, you will see I have a proxy called index:

Here we are matching HTTP GET requests that hit the root URL of this Function app (which uses the azurewebsites.net domain) and forwarding them to the back-end URL for the index page in my storage account.

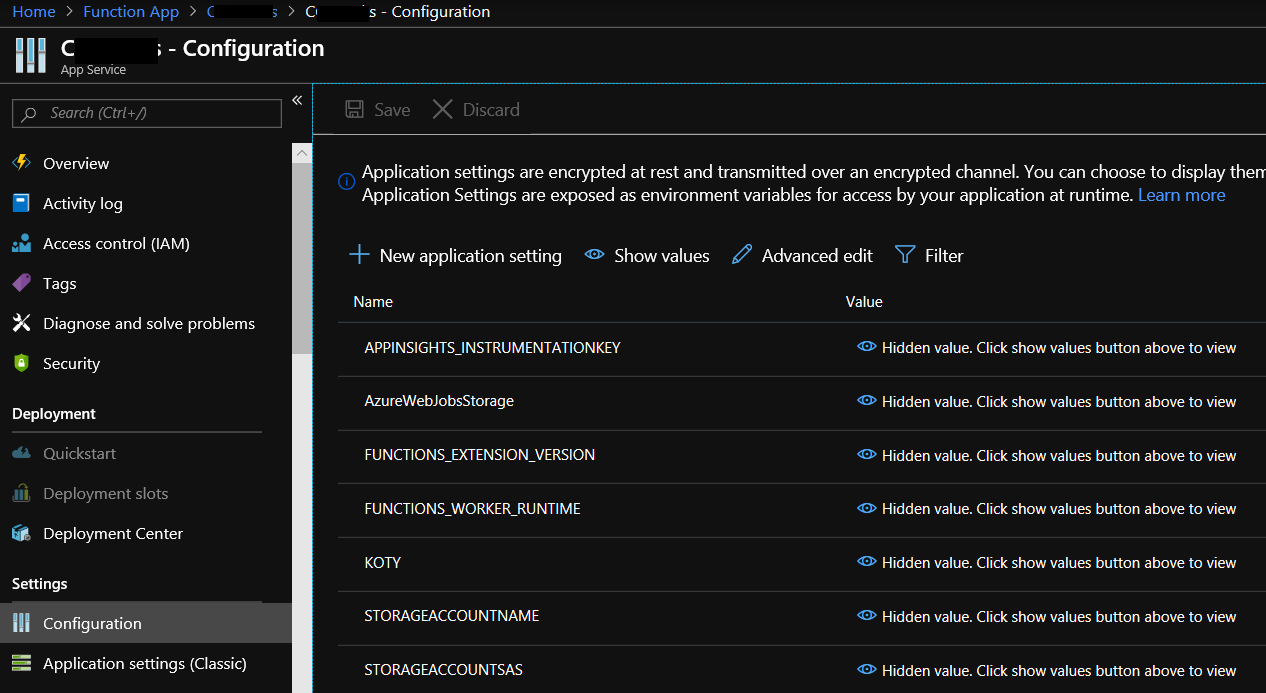

You’ll see that I also use some application settings in the app to store the storage account and SAS token. I intend to change this down the line so that the app generates its own SAS token using a PowerShell function, but for now, they are stored in the application as follows:

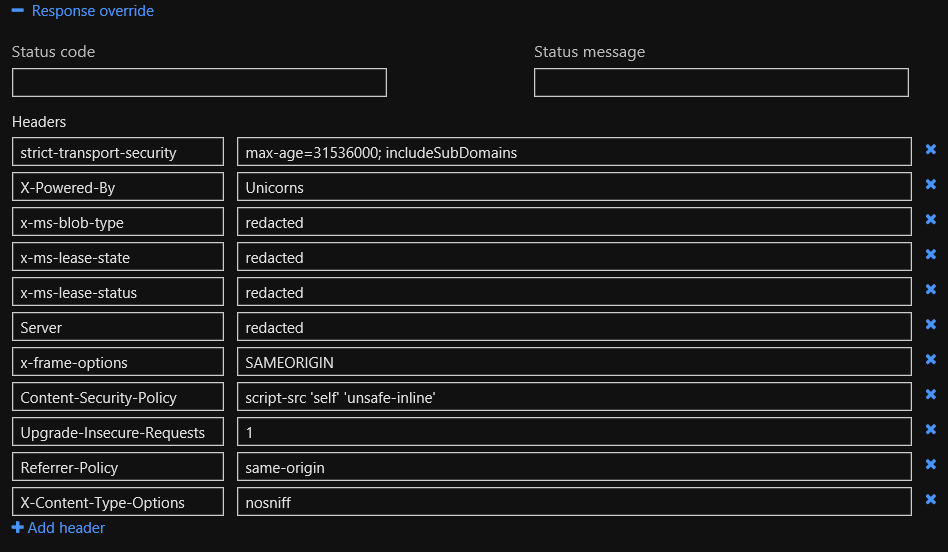
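For anyone who prefers a file to the portal, the same proxy can be described in a proxies.json file, where application settings are referenced as `%SETTING_NAME%`. The sketch below builds that structure in Python. The setting names, container and page name are hypothetical (the real ones are only in the screenshots), and it assumes the SAS token is stored without its leading `?`. It is worth verifying in your own app that settings expand as expected inside the host name and query string.

```python
import json


def build_proxies_config(account_setting: str, sas_setting: str, container: str, page: str) -> dict:
    """Build a proxies.json structure with one 'index' proxy for GET requests to '/'."""
    # %NAME% placeholders are resolved from application settings by the Functions host.
    backend = (
        f"https://%{account_setting}%.blob.core.windows.net/"
        f"{container}/{page}?%{sas_setting}%"
    )
    return {
        "$schema": "http://json.schemastore.org/proxies",
        "proxies": {
            "index": {
                "matchCondition": {"methods": ["GET"], "route": "/"},
                "backendUri": backend,
            }
        },
    }


# Hypothetical setting names and blob path, for illustration only.
config = build_proxies_config("STORAGE_ACCOUNT", "SAS_TOKEN", "camera", "index.html")
print(json.dumps(config, indent=2))
```

Keeping the account name and token in application settings means the proxy definition itself contains no secrets.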

This then allows me to set up Response overrides so that I can configure response headers that increase security significantly:
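Which headers to set is a matter of choice. As a sketch only, the snippet below adds a few commonly used security headers to a proxy definition using the `response.headers.<name>` keys that proxies.json accepts under `responseOverrides`. The particular headers and values are assumptions for illustration rather than the ones configured here, and the back-end URL is a placeholder.

```python
import json

# Assumed header choices for illustration; adjust them to your own needs.
SECURITY_HEADERS = {
    "Strict-Transport-Security": "max-age=31536000",
    "X-Content-Type-Options": "nosniff",
    "X-Frame-Options": "DENY",
}


def with_security_headers(proxy: dict) -> dict:
    """Return a copy of a proxy definition with security response headers added."""
    overrides = {f"response.headers.{name}": value for name, value in SECURITY_HEADERS.items()}
    existing = proxy.get("responseOverrides", {})
    return {**proxy, "responseOverrides": {**existing, **overrides}}


index_proxy = {
    "matchCondition": {"methods": ["GET"], "route": "/"},
    "backendUri": "https://example.invalid/index.html",  # placeholder back end
}
print(json.dumps(with_security_headers(index_proxy), indent=2))
```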

Securing your proxy with authentication

Now you may be saying that’s all well and good, but anyone who finds out the app URL can just go and view my security cam clips - so what was the point?

Well, another advantage of using a Function Proxy as the front-end is that I can add authentication.

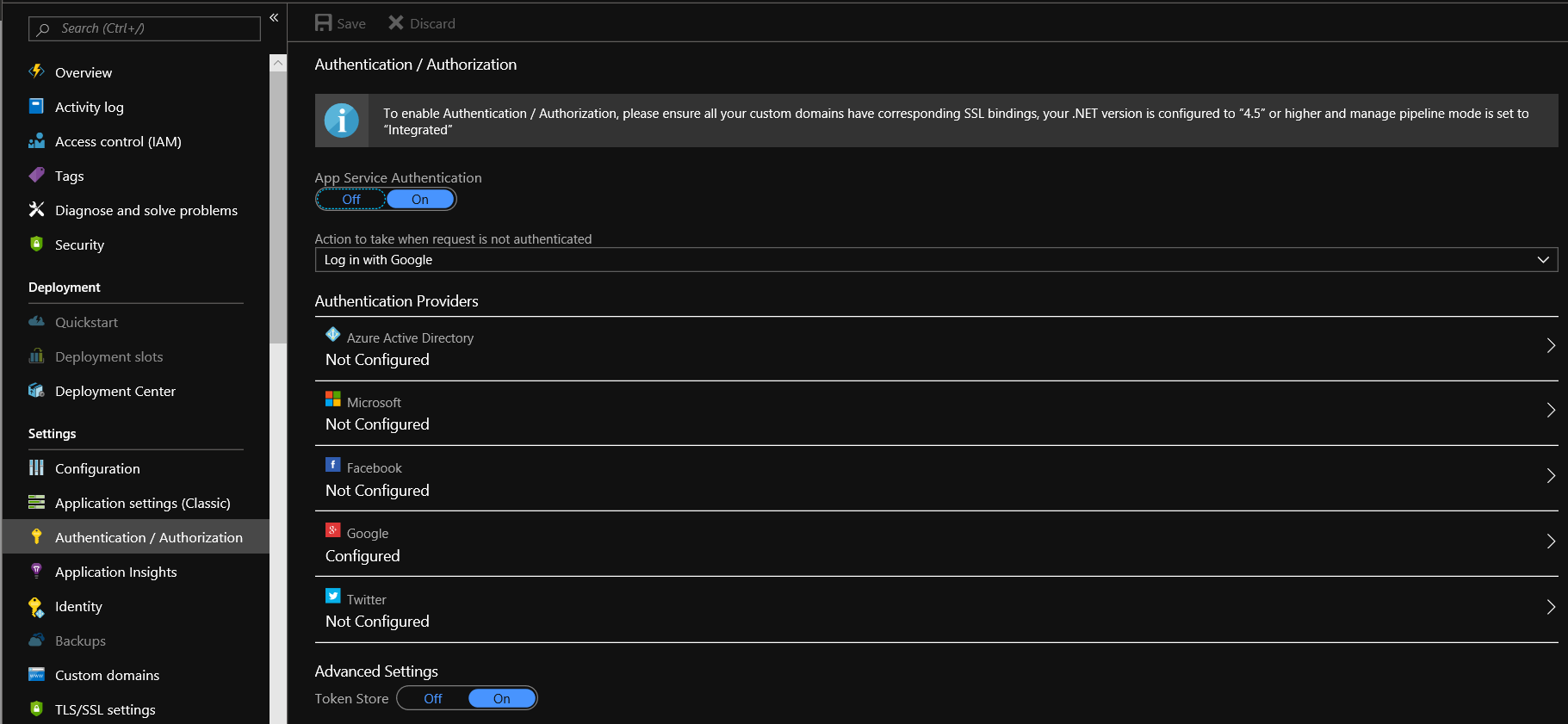

In the app settings, go to Authentication/Authorization then:

- Click on On to enable App Service Authentication

- Choose your authentication provider (in my case, I have chosen Google since I already have a Google account set up for my existing workflow)

- Follow the appropriate guide to set up the authentication provider chosen (more on this in a moment)

- Be sure to set Action to take when request is not authenticated to log in with the provider chosen - or a backup provider, if you prefer.

- Click on Save

It should look similar to below:

As mentioned above, there are guides for each provider on how to set up the app service authentication.

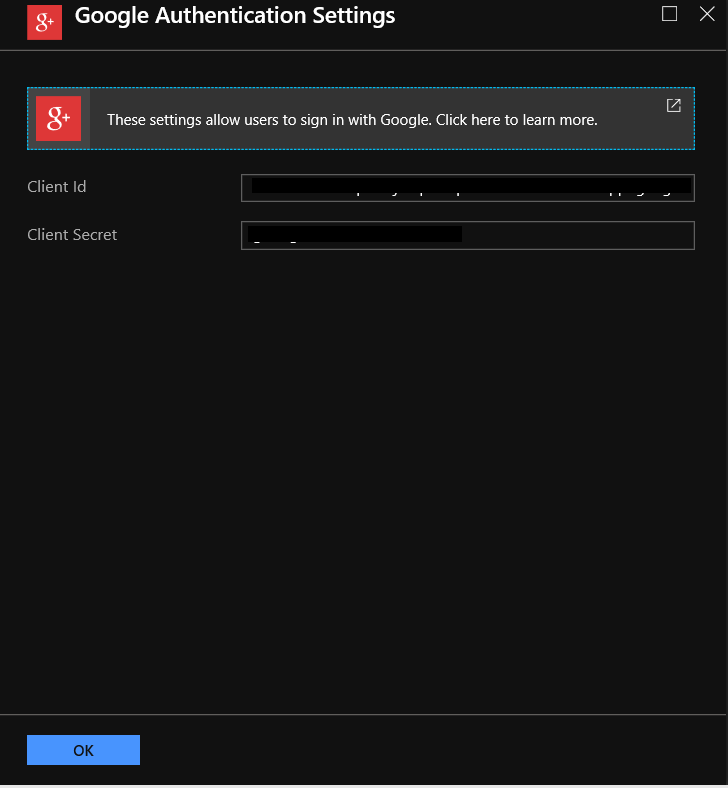

In my case, as I went with Google, once you click on the Google provider in the list, you will see a blade like the one below:

On that blade is a link to a guide - click anywhere in the box which includes the text Click here to learn more to go to the guide - which for Google is here.

Once you complete the steps in the guide, you’ll have a Client Id and Client Secret to add into the blade. Then click on OK.

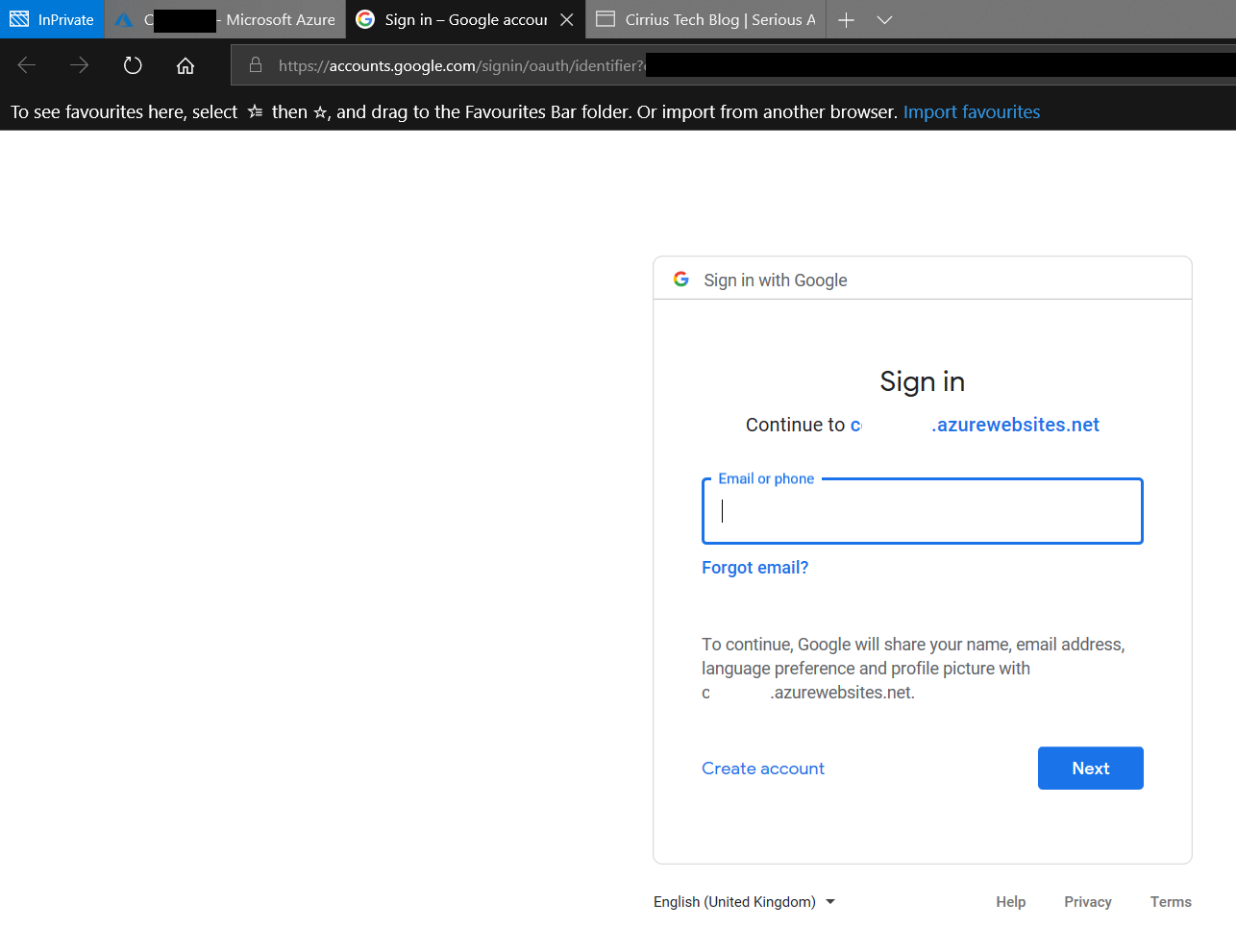

Now, if I try to access the webpage using the storage account URL, I get denied because the container access policy is set to Private (as it should be) - but when I go via the Function App URL, I get prompted for authentication by Google:

Only if I pass the Google auth challenge do I get access to the webpage blob in the container.

Summary

So now I have a secure web front-end for a webpage hosted in a storage account, all for pennies (in fact, I’ve yet to incur any cost whatsoever for requests hitting this proxy and, given our likely usage profile, am unlikely to).

Next time you want to secure external access to a resource you’ve built in Azure, if you don’t see a good fit for requirements, you might consider an Azure Functions Proxy!

As ever, thanks for reading and feel free to leave comments below.