I don’t write code. I sculpt it.

That line, borrowed from Mark Russinovich and then accidentally absorbed into my own operating system, has always felt more accurate than “I develop software”. Good engineering is rarely linear. You do not sit down with perfect requirements, produce perfect architecture, then calmly type a perfect system into existence while orchestral music swells in the background and your coffee remains at a drinkable temperature.

You shape things. You chip away. You remove what does not belong. You step back, squint a bit, mutter something unpublishable, then realise the thing you built has finally started to tell you what it wants to become.

That works beautifully when you are the one holding the chisel.

It gets strange when the chisel is an AI agent that remembers every questionable compromise, every half-fix, every discarded direction, every temporary assumption, and then politely tries to incorporate all of it into the next version as if your mess were a set of sacred architectural principles.

That is where my thinking on agentic development shifted. Not because one model suddenly became magical. Not because I found the perfect prompt. Not because I transcended into some enlightened “ten tabs of Copilot and vibes” state, although admittedly there has been a fair amount of that too.

The shift came from realising that the problem was not (entirely) prompting.

The problem was workflow.

The polite disaster of one more tweak

My first attempts at building with agents followed what I suspect is now the default industry anti-pattern: one model, one session, keep going until it works.

It feels right at first. It mirrors conversation. You ask for something, the model produces something, you tweak, it adjusts, and there is a satisfying sense of momentum. You can see the system changing in real time. Screens appear. Tests move. Files update. For a while, it feels like speed.

Then, somewhere around turn fifteen, that feeling ages like milk.

The model is not obviously wrong. That would be easier. It is almost right, which is much more dangerous. Fixes land, but slightly off-centre. Behaviour changes in ways that technically make sense but do not match the intent. You find yourself repeating the same instruction with tiny variations, as if phrasing it like a tired primary school teacher might finally unlock the correct branch of reality.

At some point, you realise you are no longer sculpting.

You are negotiating.

Worse, you are negotiating with something that has perfect recall (debatable) of all your previous compromises but no reliable instinct for which of them were temporary scaffolding and which were load-bearing structure.

That is the moment the whole “just keep the session going” model starts to fall apart.

Context is not state. It is sediment.

The breakthrough was understanding context differently.

We often talk about the context window as if it were a clean representation of current state. It is not. It is a historical artefact. It contains what happened, not just what matters. It contains the original idea, the first implementation, the fix, the alternative, the partial revert, the correction to the correction, and that one weird compromise you only accepted because you wanted to see whether the test would pass.

The model then tries to be faithful to all of it.

Which is exactly the problem.

From the outside, this looks like progress. Internally, it is drift. The system has not merely evolved. It has accumulated debt. Every turn leaves residue. Every abandoned branch still echoes. Every “try this instead” becomes another layer of interpretive archaeology.

Systems don’t fail because of a single bad decision. They fail because they quietly accumulate too many of them.

That is not sculpting.

That is sediment.

And once I saw it that way, the fix became obvious, even if it took me longer than I would like to admit to actually behave accordingly.

Stop trying to rescue contaminated context.

Reset it.

Cost made denial expensive

This might have remained a mild irritation if the economics had stayed invisible. Long sessions felt productive, but in hindsight they were mostly productivity theatre: lots of movement, very little forward progress.

Then premium request multipliers in GitHub Copilot were dramatically increased, and suddenly the loop had a price tag that I couldn’t justify.

I had been mostly using Claude Opus models because they were generally better, keeping GPT-Codex for lighter, simpler tasks.

But when the multiplier for Claude Opus went from 3x (3 premium requests every time you ask it something) to 15x, I was watching my monthly quota of premium requests disappear faster than cake when I’m stressed!

Every extra turn cost something. Every “nearly there” cost something. Every correction to a correction cost something. The old workflow was not just unreliable. It was expensive.
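To make the arithmetic concrete, here is a minimal sketch. Only the 3x and 15x multipliers come from the text above; the session length and monthly quota are hypothetical numbers picked for illustration, not real plan limits.

```python
def premium_requests_used(turns: int, multiplier: int) -> int:
    """Premium requests consumed by one session of `turns` prompts."""
    return turns * multiplier


# Multipliers quoted in the text above.
OLD_MULTIPLIER = 3
NEW_MULTIPLIER = 15

# Hypothetical values, chosen only to make the arithmetic concrete.
TURNS_PER_SESSION = 20
MONTHLY_QUOTA = 1500

old_cost = premium_requests_used(TURNS_PER_SESSION, OLD_MULTIPLIER)
new_cost = premium_requests_used(TURNS_PER_SESSION, NEW_MULTIPLIER)

# Cost of one session, and how many such sessions fit in the quota.
print(old_cost, MONTHLY_QUOTA // old_cost)  # 60 25
print(new_cost, MONTHLY_QUOTA // new_cost)  # 300 5
```

Under those assumed numbers, the same wandering twenty-turn session goes from something you can afford most working days to something you can afford five times a month.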

That forced a better question.

Not “how do I get a better answer from this model?”

Why do I need so many attempts to get a correct one?

That is a different class of question. It moves the focus from individual model performance to system design. It stops treating the model as the whole solution and starts treating it as one component inside a workflow.

That is where things got interesting.

From models to roles

The first mistake was asking one model to do too much.

Think. Plan. Implement. Debug. Refactor. Explain. Remember all constraints. Ignore obsolete context. Preserve architecture. Improve quality. Do not over-improve. Be creative, but not too creative. Fix the issue, but do not touch anything else. Also please infer the thing I meant but did not quite say because I was typing on my phone while making coffee.

That is not a toolchain.

That is a liability with a friendly interface.

So I stopped thinking in terms of “which model is best?” and started thinking in terms of “which model is best for which role?”

The generalist shapes the problem in tandem with the human. The planner produces the implementation plan. The executor implements. The fixer handles bounded corrections. Tests, canaries, and deterministic checks decide what is true.

Each component does less, yet the system does more.

That sounds obvious, but it is one of those obvious things that only becomes obvious after you have done the stupid version enough times to develop scar tissue.
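The role split can be sketched as a chain of handoffs in which each stage sees only the previous stage's artefact rather than the whole conversation. This is an illustrative sketch, not a real agent framework: `Stage`, `run_stages`, and the lambda stand-ins are hypothetical names, and a real stage would call a model instead of formatting a string.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Stage:
    role: str
    run: Callable[[str], str]  # receives only the previous artefact


def run_stages(ask: str, stages: list[Stage]) -> list[tuple[str, str]]:
    """Pass each stage the previous stage's output, never the full history."""
    handoffs = []
    artefact = ask
    for stage in stages:
        artefact = stage.run(artefact)
        handoffs.append((stage.role, artefact))
    return handoffs


# Hypothetical stand-ins for model calls.
stages = [
    Stage("generalist", lambda ask: f"shaped({ask})"),
    Stage("planner", lambda brief: f"plan({brief})"),
    Stage("executor", lambda plan: f"diff({plan})"),
]

handoffs = run_stages("add trifecta view", stages)
print(handoffs[-1][1])  # diff(plan(shaped(add trifecta view)))
```

The point of the shape is the narrow interface: the executor never sees the brainstorming that led to the plan, only the plan. Whether the resulting diff is correct is still decided by tests and checks, not by any stage in the chain.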

Baselines, because helpful is dangerous

Splitting roles helped, but it did not solve everything. Models are aggressively helpful. That is their whole thing. They refactor code you did not ask them to refactor. They introduce dependencies because it looks cleaner. They tidy “while they are there”. They improve abstractions that were intentionally boring. They turn small fixes into minor architectural renovation projects and then look at you with the glowing optimism of a junior consultant who has just discovered design patterns.

That is fantastic when you are exploring.

It is catastrophic when you are trying to build something stable.

So I introduced coding agent baselines.

This is what that looks like in practice:

    .github/
    ├── copilot-instructions.md
    └── instructions
        ├── cloud
        │   ├── azure.instructions.md
        │   ├── entra.instructions.md
        │   ├── gcp.instructions.md
        │   ├── microsoft-graph.instructions.md
        │   └── netlify.instructions.md
        ├── core
        │   ├── baseline.instructions.md
        │   ├── dependency.instructions.md
        │   ├── identity.instructions.md
        │   └── security.instructions.md
        ├── languages
        │   ├── html.instructions.md
        │   ├── javascript.instructions.md
        │   ├── powershell.instructions.md
        │   ├── python.instructions.md
        │   ├── terraform.instructions.md
        │   └── typescript.instructions.md
        └── platform
            ├── cicd.instructions.md
            ├── docker.instructions.md
            └── kubernetes.instructions

Not vibes. Not suggestions. Rules.

The core baseline defines the physics of the repository: do not change architecture unless explicitly instructed, do not expand scope, tests define truth, minimise diffs, preserve existing behaviour unless the task says otherwise.
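The baseline itself is prose that the agent reads, but a rule like "do not expand scope" can also be backed by a deterministic check. The sketch below is one hypothetical way to do that and is not taken from the baseline files: it assumes the plan lists the path patterns a change may touch, and that you already have the list of changed files (for example from your version control tooling).

```python
from fnmatch import fnmatch


def out_of_scope(changed_files: list[str], allowed_patterns: list[str]) -> list[str]:
    """Return the changed files that match none of the plan's allowed patterns."""
    return [
        path
        for path in changed_files
        if not any(fnmatch(path, pattern) for pattern in allowed_patterns)
    ]


# Hypothetical plan scope and hypothetical diff.
allowed = ["src/api/*", "tests/*"]
changed = ["src/api/paths.py", "tests/test_paths.py", "package.json"]

print(out_of_scope(changed, allowed))  # ['package.json']
```

A non-empty result does not prove the change is wrong. It is just a cheap signal that the executor touched something the plan never mentioned, which is worth a look before merging.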

The language baselines define how code should feel in each stack. For TypeScript, JavaScript, Python, HTML, PowerShell, Terraform, Docker and the rest, the point is not to create a style police cosplay department. The point is to remove unnecessary decision-making. Prefer native code over dependencies for small pieces of functionality. Avoid packages entirely for short, simple code where the dependency adds more supply-chain surface than value.

Fewer dependencies do not just mean simpler code; they mean fewer places for assumptions to hide.

In security researcher mode, avoid dependencies for exploit code unless there is a strong reason not to, because portable exploit code beats dependency archaeology every time.

The platform baselines do the same at the environment layer. Azure, Entra, Microsoft Graph, Netlify, GCP, Terraform, Docker: each has different traps, defaults, assumptions, authentication patterns, and foot-guns. The baseline keeps those from being rediscovered one hallucinated helper function at a time.

This is not about controlling the model for the sake of control.

It is about removing ambiguity.

And ambiguity is where most wasted turns live.

Prompt architecture: shape before planning

One of the more useful shifts was adding a general-purpose shaping step before the planner.

Not to solve the problem. Not to write the code. Not to produce the final plan.

Just to sharpen the ask.

That sounds like process theatre until you watch what happens without it. A vague prompt becomes a slightly wrong plan. A slightly wrong plan becomes an implementation that is mostly correct in the least helpful way possible. Then you spend the next four turns correcting something that never should have made it into the plan.

Shaping reduces the error surface before the work begins. Which means fewer corrections, fewer retries, and fewer places for drift to take hold.

This became especially obvious in the MCP work I was doing recently. I was moving from individual MCP server analysis into broader toolchain composition: filesystem-like sources, Postgres, potentially SaaS services, then deterministic canary testing across discovered capabilities. That is a lot of room for ambiguity. Even the threat model needed careful handling, because “a source has data” is not automatically the same thing as “the content can command the LLM”. That distinction matters. Without it, everything starts looking like Simon Willison’s lethal trifecta even when it is really closer to malicious insider territory or capability exposure without a demonstrated instruction path.

That is exactly the kind of nuance that gets destroyed by a sloppy prompt.

So the first step became: shape the problem until the planner cannot plausibly misunderstand the mission.

Then plan.

Then execute.

Planning and execution must be separate

This was the next hard rule.

Planning is not implementation. Implementation is not planning. Mixing them is how you end up with a model confidently coding its way around a problem it has not properly understood.

The plan is the contract.

The executor’s job is to follow the contract.

The useful trick here is that the planner and executor should not be the same mental space. If the planner is wrong, fix the plan. If the executor deviates, fix the execution. If the tests fail, reason from the failure.

That sounds boring.

Good.

Boring is underrated. Boring is where repeatability lives.

Choose your models wisely

Not all models are equal. A general-purpose model will never be as good at writing code as a coding agent. Similarly, a coding agent is a terrible architect!

My choice is to use ChatGPT as my Rubber Duck in most of what I do, to allow me to think out loud.

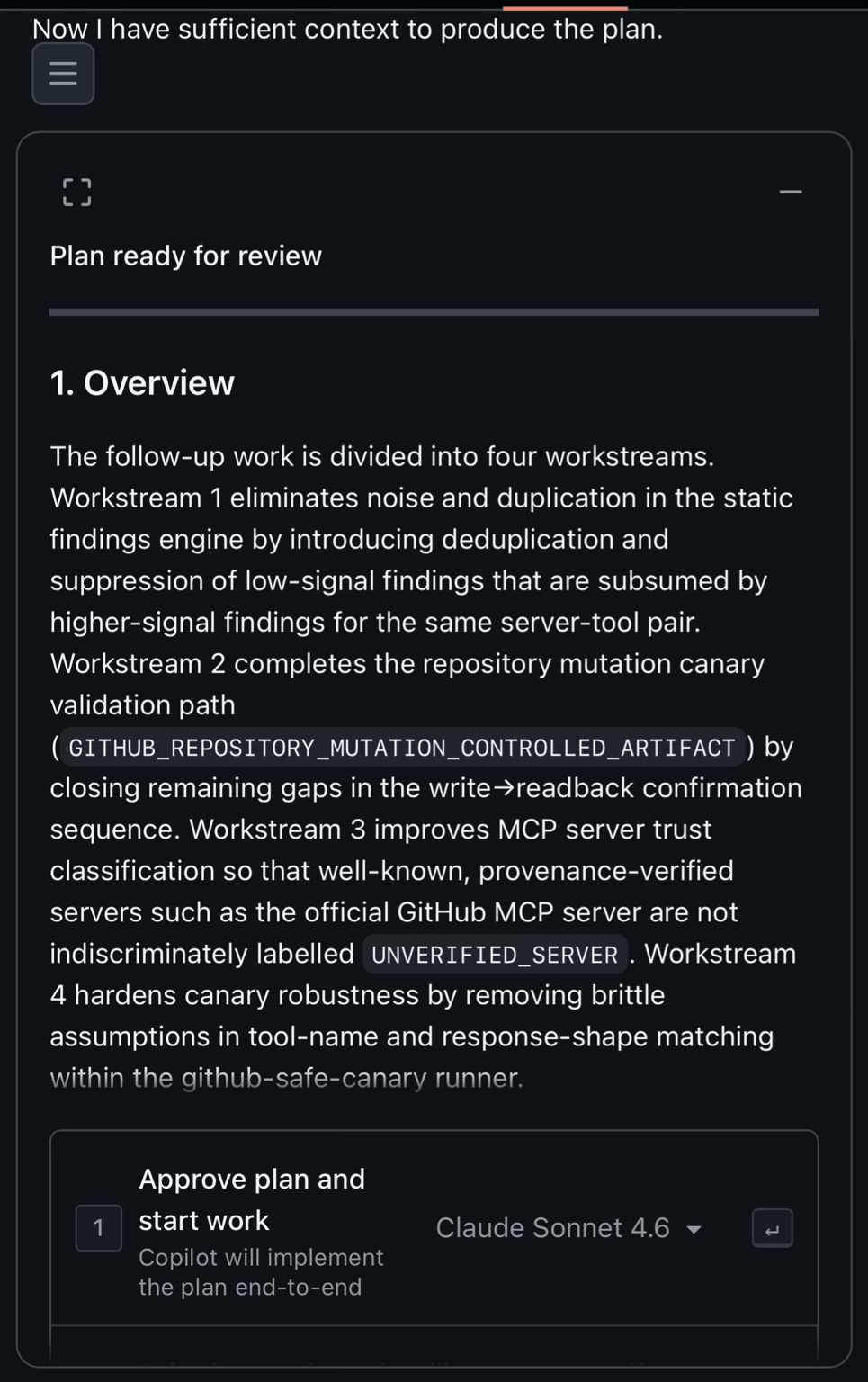

When I have an idea (my own, ChatGPT’s, or a hybrid), I get ChatGPT to produce a planning prompt for Claude Sonnet (4.6 at time of writing, because 4.7 is too expensive).

ChatGPT produces a better prompt for Sonnet than I have the time and patience for. It knows my intent and knows how best to drive Sonnet to produce a plan that the executor will successfully implement.

I review the plan from Sonnet. If need be, I can steer it with a follow-up prompt, but I haven’t had to yet.

Then I change the model on the “Approve plan and start work” dialog from Claude Sonnet to GPT-5.3-Codex (at time of writing) and approve it to go and do the coding. This has massively reduced wasted turns and improved accuracy and overall efficacy, while drastically reducing my token and premium request burn rate!

The PR is the unit of intent

The next lesson hurt a little because it runs against the grain of how easy these tools make it to keep going.

“One PR to rule them all” is a bad idea.

Not mildly suboptimal. Bad.

A pull request should not be a bucket for related activity. It should be a container for intent. One PR, one meaningful change, one validation story. If you shove multiple intentions into one PR, you are not saving time. You are forcing several problems to share one context window and one review surface.

That is not a PR.

That is a memory leak with branch protection.

The better loop is simple: plan one thing, implement one thing, validate one thing, merge, reset. The discipline matters more than the cleverness. Especially with agents, because the longer a change lives as a wandering conversation, the more likely it is to absorb accidental requirements and stale assumptions.

The reset is not admin overhead.

The reset is the mechanism that keeps the workflow clean.

The trifecta bug that made it click

This became painfully concrete during the MCP trifecta work.

I was building a view to identify exploit paths across MCP toolchains: read, model context, send. The UI looked right. The grouping made sense. The badges were clean. The conceptual model was solid.

And the view was empty: no exploitable paths.

Which was surprising, because the test data should have shown something.

The issue was not the UI. Codex had implemented that part perfectly well. The issue was the contract. The API was not emitting the trifecta metadata the UI needed. The frontend was faithfully rendering the absence of a thing the backend had failed to provide.

In an old workflow, that could easily have become a wandering session. Tweak the UI. Check the filters. Rework the grouping. Add logging. Question the test data. Sacrifice a rubber duck to the debugging gods.

Instead, the problem was bounded.

The contract is wrong. Fix the API.

One turn. Done.

Not because the model suddenly became better, but because the feedback loop became cleaner.

That is the difference.
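A contract check is the kind of guard that turns this class of bug into a one-line failure instead of a debugging session. The sketch below is illustrative only: the real API's response shape is not shown in this post, so the `trifecta` key and its three field names are assumptions borrowed from the read, model context, send framing above.

```python
# Hypothetical field names; the real API contract is not shown in this post.
REQUIRED_TRIFECTA_FIELDS = {"read", "model_context", "send"}


def missing_trifecta_fields(path_record: dict) -> set[str]:
    """Return the trifecta fields the UI needs but the record does not provide."""
    trifecta = path_record.get("trifecta") or {}
    return REQUIRED_TRIFECTA_FIELDS - set(trifecta)


# A record shaped like the bug: the backend omits the metadata entirely.
broken = {"id": "path-1", "tools": ["fs.read", "mail.send"]}
fixed = {
    "id": "path-1",
    "trifecta": {"read": True, "model_context": True, "send": True},
}

print(sorted(missing_trifecta_fields(broken)))  # ['model_context', 'read', 'send']
print(sorted(missing_trifecta_fields(fixed)))   # []
```

Run against the API response in a test, a check like this points at the backend straight away, which is what made the fix a single bounded turn.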

Composability has teeth

The MCP work also reinforced something I keep coming back to in security: individual capabilities are often less interesting than their composition.

A tool that can read is not automatically catastrophic. A model that can reason over context is not automatically catastrophic. A tool that can send or mutate data is not automatically catastrophic.

Put them together and the question changes.

Now you are no longer asking “what can this tool do?”

You are asking “what can this system achieve?”

That is a much more security-relevant question. It is also why deterministic validation matters. It is not enough to infer that a path might exist. You need to test behaviour. Can the tool actually read the target data? Can the model actually receive and transform it? Can another tool actually send it somewhere meaningful? Does the content influence the model as instruction, or is it merely data present in a source?

That last distinction matters. A lot.

Because otherwise every datastore becomes a panic button and every integration becomes a lethal trifecta by vibes. That is not analysis. That is astrology with JSON schemas.

The right answer can be “complete path”. It can be “partial path”. It can be “capability present, exploitability not demonstrated”. It can also be “inconclusive”.

Inconclusive is not failure. Inconclusive is honesty.

And in security, honest uncertainty beats confident nonsense every time.
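Those four outcomes can be written down as an explicit classification so that "inconclusive" is a first-class result rather than an embarrassment. This is a sketch under stated assumptions: the three probe inputs and the mapping from probe results to verdicts are illustrative choices, not a definitive scoring scheme. Each input is `True` (demonstrated), `False` (tested and not demonstrated), or `None` (the probe gave no usable result), and the capabilities are assumed to have been discovered already.

```python
from enum import Enum
from typing import Optional


class Verdict(Enum):
    COMPLETE_PATH = "complete path"
    PARTIAL_PATH = "partial path"
    CAPABILITY_ONLY = "capability present, exploitability not demonstrated"
    INCONCLUSIVE = "inconclusive"


def classify(
    can_read: Optional[bool],
    content_acts_as_instruction: Optional[bool],
    can_send: Optional[bool],
) -> Verdict:
    """Map three probe results to a verdict (assumed mapping, for illustration)."""
    steps = (can_read, content_acts_as_instruction, can_send)
    if any(step is None for step in steps):
        # A probe that produced no usable result is not a negative result.
        return Verdict.INCONCLUSIVE
    if all(steps):
        return Verdict.COMPLETE_PATH
    if not content_acts_as_instruction:
        # Data is merely present in a source; no instruction path was shown.
        return Verdict.CAPABILITY_ONLY
    return Verdict.PARTIAL_PATH


print(classify(True, True, True).value)   # complete path
print(classify(True, None, True).value)   # inconclusive
```

Keeping `None` separate from `False` is the design choice that matters here: it stops an untested step from being silently recorded as either safe or exploitable.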

Deterministic canaries beat vibes

This is where I think the agentic SDLC and security validation start to overlap in a very practical way.

If models are good at generating, grouping, summarising, planning, and implementing, they are also good at creating the illusion that something has been proven when it has merely been described.

So the validation layer cannot be vibes-based.

It needs canaries. It needs explicit probes. It needs deterministic checks that confirm what happened, not what the model thinks probably happened. Especially in MCP-style systems where discovered capabilities can be chained, the difference between “the system contains these tools” and “the system can successfully execute this end-to-end path” is the difference between architecture review and exploitability analysis.

This is why the workflow moved naturally towards deterministic canary testing. Discover capabilities. Classify possible chains. Test the chains. Record what is proven, what is partial, and what remains theoretical.

That is not just better security research.

It is better agentic development.

Because the same principle applies to code: do not trust the generated artefact until the validation layer says it behaves correctly.
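As a minimal sketch of what a deterministic canary looks like: plant a unique token in the source, run the chain, and check whether that exact token arrives at the sink. Everything here is a stand-in. `fake_chain` is a hypothetical placeholder for a real read, model, send path, and the names are not from any MCP library.

```python
import secrets


def make_canary() -> str:
    """Create a unique token that cannot plausibly appear by accident."""
    return f"CANARY-{secrets.token_hex(8)}"


def chain_proven(canary: str, sink_records: list[str]) -> bool:
    """True only if the exact canary was observed at the sink."""
    return any(canary in record for record in sink_records)


def fake_chain(source_text: str, sink: list[str], forwards: bool) -> None:
    """Hypothetical stand-in for a read -> model -> send toolchain."""
    if forwards:
        sink.append(f"outbound message: {source_text}")


canary = make_canary()

leaky_sink: list[str] = []
fake_chain(f"meeting notes {canary}", leaky_sink, forwards=True)

quiet_sink: list[str] = []
fake_chain(f"meeting notes {canary}", quiet_sink, forwards=False)

print(chain_proven(canary, leaky_sink))  # True
print(chain_proven(canary, quiet_sink))  # False
```

The verdict comes from what was observed at the sink, not from the model's own account of what it did, which is the whole difference between a canary and a summary.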

The Mythos or Glasswing lesson

Around the same time, there was discussion about systems like Mythos, sometimes referred to as Project Glasswing. The obvious takeaway people gravitate towards is that these systems are powerful.

That is true, but not the interesting part.

The interesting part is why.

It is not just that a better model sits in the middle. Models will keep improving. That is the easy prediction. The more important lesson is that the power comes from scaffolding: the pipeline, the structure, the orchestration, the routing, the memory boundaries, the validation layer, the way work is decomposed and recomposed.

In other words, the system matters more than the component.

That should feel familiar to anyone who has spent time in cloud security. The fragile part is rarely the shiny service in isolation. It is the trust boundary between services. The assumption between layers. The place where one component’s “obvious” behaviour becomes another component’s implicit dependency. Assumptions have teeth. Foundations matter. Apparently even the robots need guardrails and a decent SDLC.

The Agile Agentic Development Life Cycle

At some point, this stopped being a set of personal habits and started looking like a lifecycle.

I have been calling it the Agile Agentic Development Life Cycle, or AADLC, because apparently nothing is real until it has an acronym and a diagram that can frighten a steering committee.

Ideation remains human-led. That matters. The human owns intent, taste, trade-offs, and the judgement call about whether the thing is even worth doing.

Prompt shaping turns messy intent into a clear problem statement. This is where ambiguity gets removed before it metastasises.

Planning produces the contract. Not code. Not cleverness. A plan.

Execution follows the plan with minimal deviation.

Validation decides what is true.

Merge closes the unit of intent.

Reset clears the sediment.

Then the loop begins again.

That final step is the one people are most likely to skip because it feels wasteful. It is not wasteful. It is the point. A clean context window is not a luxury. It is the equivalent of starting the next change from a clean working tree instead of rummaging through yesterday’s debugging leftovers and hoping nothing bites.

The cost paradox

The funny part is that this workflow looks more expensive from the outside.

More steps. More structure. More model handoffs. More up-front discipline.

And yet it costs less.

Because retries are where the cost lives.

The old workflow optimised for immediacy. Ask, build, tweak, tweak, tweak, repair, re-explain, despair, apologise to future you, continue anyway. It felt cheap because each step was small. In aggregate, it was expensive.

The new workflow spends more effort before implementation, but wastes far less effort after it. Better shaping creates better plans. Better plans create cleaner execution. Cleaner execution creates fewer fixes. Better validation prevents imaginary success. Smaller PRs reduce review pain. Context resets prevent drift.

The result is not just lower cost, it is lower friction. And that might matter even more.

Sculpting, properly this time

So yes, I still sculpt code.

But not directly in the way I used to.

The material has changed. I am not primarily sculpting functions, components, handlers, routes, policies, tests, or pipelines. The agents can do a lot of that mechanical work, often faster than I can, and sometimes with fewer typos because apparently my keyboard and I remain in a long-running adversarial relationship.

What I sculpt now is intent, constraints, boundaries and feedback loops.

The model can hold the chisel, but I still decide what shape we are trying to reveal. And if the clay starts remembering too much, I do not argue with it for another hour.

I reset it.

That, more than anything, is the lesson.

We have spent years trying to optimise prompts. Prompts matter, obviously. But prompts are not enough. A good prompt inside a bad workflow still becomes expensive sediment. A decent prompt inside a disciplined workflow can produce reliable results because the system catches the failure modes before they compound.

The biggest improvement was not a model, it was structure.

The biggest cost saving was not (solely) cheaper tokens, it was fewer attempts.

The biggest conceptual shift was not “AI can write code”. We already knew that.

It was this:

Do not ask one model, in one long contaminated context, to think, plan, build, debug, refactor, validate, and remember which of your previous instructions were lies you told temporarily to get past a failing test.

That way lies madness (and invoices).

I did not stop sculpting.

I just stopped letting the clay remember everything.