Hero image generated by ChatGPT

This is a personal blog and all content herein is my personal opinion and not that of my employer.

This is Part 2 of a 3-part series.

| Part | Title |
|---|---|
| Part 1 | The Leak, the Context, and the Framework |
| Part 2 (this post) | Mapping the Trust Boundaries and the Attack Tree |
| Part 3 | Defending Against Runtime Abuse |

Phase 2: Boundary mapping — where trust changes form

When I say “boundary”, I do not mean a vague diagram box with a different pastel shade. I mean a point where one form of authority is transformed into another. Those are the places where design assumptions harden into security outcomes.

At a high level, the architecture looked like this:

That diagram is intentionally boring. Good architecture diagrams usually are. What matters is the interpretation.

Boundary 1: OAuth identity to bridge-issued capability

This is the most interesting seam in the whole system. A user identity authenticated through OAuth is used to call a bridge endpoint that returns a worker-oriented credential set.

- Why this matters: This is classic identity-to-capability translation. If it fails, you have a confused deputy problem: the AI equivalent of JWT scope creep, where a token meant for “viewing” accidentally grants “executing.” If I had to pick a single place where reportable issues would naturally live, it would be here.
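To make the translation step concrete, here is a minimal sketch of the two checks I would expect at a bridge like this: the caller must own the session, and the minted credential must never carry more than the identity already holds. Every name in it (`Identity`, `WorkerCredential`, `SESSION_OWNERS`, `mint_worker_credential`, the scope strings) is hypothetical and chosen for illustration; none of it is taken from the source.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Identity:
    user_id: str
    scopes: frozenset  # scopes granted at the OAuth layer


@dataclass(frozen=True)
class WorkerCredential:
    user_id: str
    session_id: str
    scopes: frozenset


# Hypothetical ownership table; a real bridge would query a session store.
SESSION_OWNERS = {"sess-1": "alice", "sess-2": "bob"}


def mint_worker_credential(identity, session_id, requested):
    """Mint a credential only for the caller's own session, never wider than the identity."""
    if SESSION_OWNERS.get(session_id) != identity.user_id:
        raise PermissionError("session is not owned by the caller")
    requested = frozenset(requested)
    if not requested <= identity.scopes:
        raise PermissionError("requested scopes exceed the identity's scopes")
    return WorkerCredential(identity.user_id, session_id, requested)
```

The confused deputy case is the one where either check is missing on some path, for example a refresh route that skips the ownership lookup.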

Boundary 2: Worker credential to session ingress token

The code suggested a token used to append logs or interact with a session ingress API: not just metadata for a WebSocket, but a real capability token.

- Why this matters: Possession often equals authority. You stop asking “is this the same as being logged in?” and start asking “what concrete operations does possession of this token allow, and how narrowly is that scope defined?”
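One way to phrase that question in code is to spell out exactly what a token permits and check every request against it. This is a sketch under my own assumptions: the `IngressToken` fields and the operation names such as `log:append` are invented for illustration and do not describe the real token format.

```python
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressToken:
    session_id: str
    operations: frozenset  # e.g. {"log:append"}; hypothetical operation names
    expires_at: float      # Unix timestamp


def authorize(token, session_id, operation, now=None):
    """Allow a request only if the token is unexpired, bound to this session, and lists the operation."""
    now = time.time() if now is None else now
    return (
        now < token.expires_at
        and token.session_id == session_id
        and operation in token.operations
    )
```

A token that only appends logs to one session for a short window is a much smaller problem when it leaks than one that is treated as "logged in".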

Boundary 3: Session token to transport

The moment you have a token feeding an SSE or WebSocket-style channel, you are binding a stateful, ongoing exchange.

- Why this matters: The source implied the transport was not only carrying outputs; it also carried control-plane material. When data and control share a transport, transport integrity matters a great deal more than people sometimes admit.
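As an illustration of treating control frames differently from data frames on a shared channel, the sketch below authenticates control payloads with an HMAC before they are accepted. The frame layout (`channel`, `payload`, `mac`) and the function names are assumptions of mine, not the wire format from the source, and the sketch deliberately does not address replay, which would need a nonce or sequence number on top.

```python
import hashlib
import hmac
import json


def _canonical(payload):
    # Stable serialisation so sender and receiver hash the same bytes.
    return json.dumps(payload, sort_keys=True, separators=(",", ":")).encode()


def sign_control(key, payload):
    """Wrap a control payload in a frame carrying an HMAC-SHA256 tag."""
    mac = hmac.new(key, _canonical(payload), hashlib.sha256).hexdigest()
    return {"channel": "control", "payload": payload, "mac": mac}


def accept_frame(key, frame):
    """Classify a frame as 'data', 'control', or 'rejected'."""
    channel = frame.get("channel")
    if channel == "data":
        return "data"
    if channel != "control":
        return "rejected"
    expected = hmac.new(key, _canonical(frame.get("payload")), hashlib.sha256).hexdigest()
    supplied = str(frame.get("mac", ""))
    if hmac.compare_digest(expected.encode(), supplied.encode()):
        return "control"
    return "rejected"
```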

Boundary 4: Transport to local runtime execution

The runtime appeared able to accept inbound control-related messages that could affect model selection, permission mode, execution state, and interrupts.

- Why this matters: A compromised or confused session is not just a passive transcript problem. It may become an execution steering problem. That is a substantial difference in impact.
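A rough sketch of what a defensive handler for such messages could look like: an allowlist of message types, validation of every value, and a rule that a remote message may narrow the permission mode but never widen it. The message types, mode names, and model names here are placeholders I made up; the real runtime's vocabulary is not reproduced.

```python
# Hypothetical modes, ordered from least to most permissive.
PERMISSION_ORDER = ["read_only", "ask", "auto"]
ALLOWED_TYPES = {"interrupt", "set_model", "set_permission_mode"}
ALLOWED_MODELS = {"model-small", "model-large"}  # placeholder names


def apply_control(state, msg):
    """Return a new state dict for a valid message; raise on anything else."""
    kind = msg.get("type")
    if kind not in ALLOWED_TYPES:
        raise ValueError(f"unknown control message type: {kind!r}")
    new_state = dict(state)
    if kind == "interrupt":
        new_state["running"] = False
    elif kind == "set_model":
        if msg.get("model") not in ALLOWED_MODELS:
            raise ValueError("model is not on the allowlist")
        new_state["model"] = msg["model"]
    else:  # set_permission_mode
        mode = msg.get("mode")
        if mode not in PERMISSION_ORDER:
            raise ValueError("unknown permission mode")
        current = PERMISSION_ORDER.index(state["permission_mode"])
        if PERMISSION_ORDER.index(mode) > current:
            raise PermissionError("remote messages may not widen the permission mode")
        new_state["permission_mode"] = mode
    return new_state
```

The design choice worth noting is the asymmetry: tightening is cheap to allow remotely, while loosening is the operation that should require a stronger, local signal.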

Boundary 5: Trusted device augmentation

A layered control added to the bridge/auth flow rather than the primary identity root.

- Why this matters: This introduces fragility. You now have multiple auth-relevant states (user identity, device trust state, session state, transport state, refresh/recovery path) that can drift independently. The source appeared to include logic concerned with stale device tokens and re-enrolment timing. Where developers are already compensating for timing weirdness, researchers should pay attention.
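One way to reason about drift is to evaluate the auth-relevant states together, in a single snapshot, instead of checking each where it happens to be used. The sketch below does that for three of them. The field names and the 30-day `MAX_DEVICE_AGE` are assumptions for illustration only; the source's actual staleness rules are not known to me.

```python
from dataclasses import dataclass

MAX_DEVICE_AGE = 30 * 24 * 3600  # hypothetical policy: 30 days, in seconds


@dataclass(frozen=True)
class AuthSnapshot:
    user_id: str
    device_user_id: str        # user the device token was enrolled for
    device_enrolled_at: float  # Unix timestamp of enrolment
    session_user_id: str       # user the session was created for
    now: float


def drift_reasons(snapshot):
    """List every way the states in this snapshot disagree with each other."""
    reasons = []
    if snapshot.device_user_id != snapshot.user_id:
        reasons.append("device token enrolled for another user")
    if snapshot.now - snapshot.device_enrolled_at > MAX_DEVICE_AGE:
        reasons.append("device token is stale")
    if snapshot.session_user_id != snapshot.user_id:
        reasons.append("session belongs to another user")
    return reasons
```

An empty list means the states agree; account switching is the obvious case where the first and third checks fire while each state still looks valid on its own.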

Phase 3: Abuse modelling — how this kind of system breaks

A good abuse model is not the same thing as claiming a live vulnerability exists. The point is to turn architecture into plausible failure classes.

| Class | Impact | Complexity | Description |
|---|---|---|---|
| Credential translation confusion | Critical | Low | Minting credentials for the wrong session or user due to weak ownership checks or refresh path differences. |
| Control-plane abuse | High | Medium | Using the transport layer to alter permission modes or model configuration via injected or replayed messages. |
| State / epoch desync | Medium | High | Exploiting race conditions or epoch mismatches during session recovery: the bugs that make engineers sigh and say “that should never happen.” |
| Capability token misuse | Medium | Low | Replaying stale ingress tokens after a session should have expired; exposure via logs, crash dumps, or browser devtools. |
| Trusted device policy fragility | Medium | Low | Inconsistent enforcement across account switching, re-enrolment, optional versus mandatory paths, and recovery flows. |
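The state / epoch desync row is the hardest to picture, so here is a toy sketch of the invariant involved: every recovery bumps an epoch counter, and anything stamped with an older epoch is refused. The `Session` class and its methods are hypothetical, and the sketch ignores concurrency, which is exactly where the real bugs in this class tend to live.

```python
class Session:
    """Toy session that invalidates pre-recovery messages via an epoch counter."""

    def __init__(self):
        self.epoch = 0

    def recover(self):
        # Each recovery starts a new epoch; older credentials and messages
        # should stop being honoured from this point on.
        self.epoch += 1
        return self.epoch

    def accept(self, message_epoch):
        # Only messages stamped with the current epoch are accepted.
        return message_epoch == self.epoch
```

The failure class in the table is any path where `accept` is effectively skipped, or where two components disagree about the current epoch.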

The most interesting chain

If I compress the whole thing to a single line, the most interesting path is:

OAuth identity → bridge credential minting → worker credential → session ingress token → transport → control message → runtime state

That is not just a list. It is a sequence of authority transformations.

And each transformation is a point where mistakes can accumulate rather than merely occur.
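A small sketch of how that accumulation could be audited: write down what each hop is allowed to do and look for the first hop that holds more than its parent. The hop names follow the chain above, but the scope sets are invented for illustration, and the last hop is deliberately wrong to show the check firing.

```python
# Hypothetical scopes at each hop of the chain; illustrative values only.
CHAIN = [
    ("oauth_identity", {"session:view", "session:write", "control:send"}),
    ("worker_credential", {"session:view", "session:write"}),
    ("session_ingress_token", {"session:write"}),
    ("transport", {"session:write", "control:send"}),  # regains a dropped scope
]


def first_widening(chain):
    """Return (hop_name, extra_scopes) for the first hop holding more than its parent, else None."""
    for (_, parent), (name, child) in zip(chain, chain[1:]):
        extra = set(child) - set(parent)
        if extra:
            return name, extra
    return None
```

With the values above, `first_widening(CHAIN)` points at the transport hop, because `control:send` was dropped two hops earlier and then reappears.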

From boundaries to the tree

We have identified the seams of the system. The attack tree is where we show how they tear. By mapping the five boundaries from Phase 2 into a logical sequence, we can see how an attacker moves from a valid identity to full runtime control.

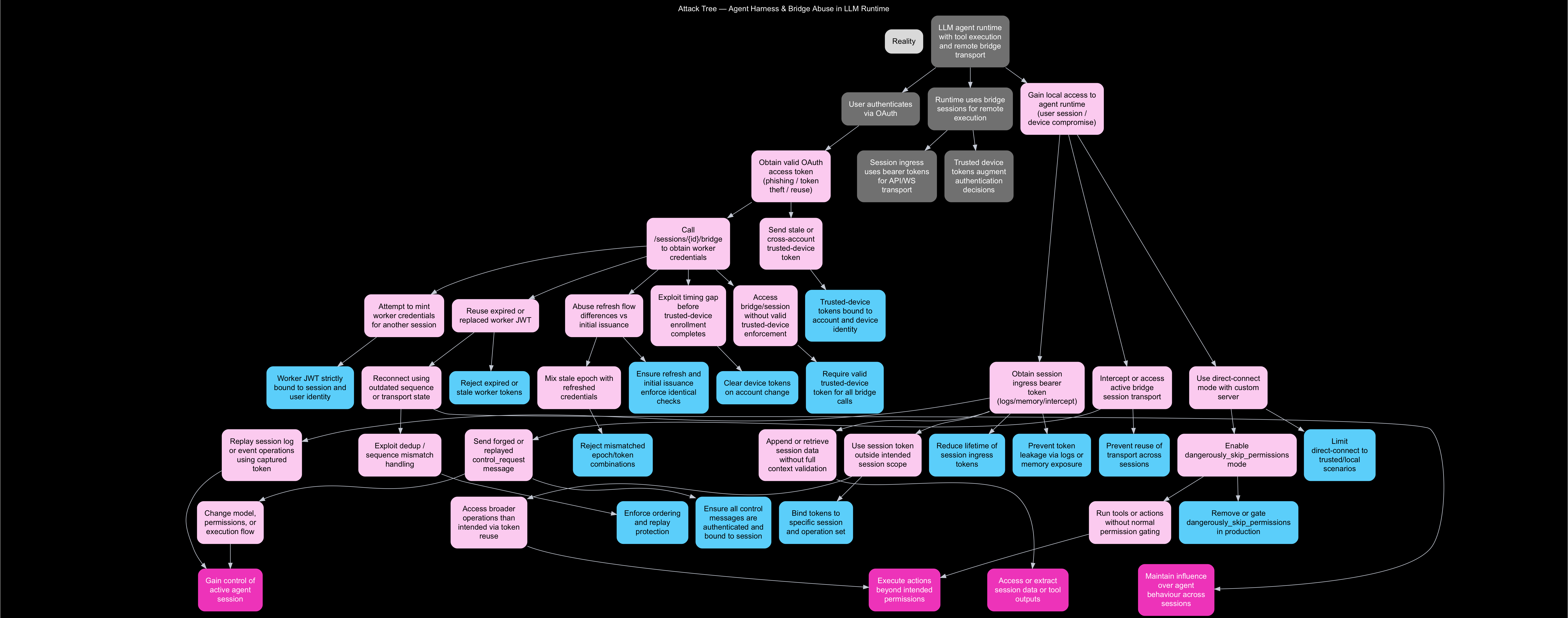

The full attack and mitigation tree — including defensive countermeasures at each node — is shown below:

Attack Tree - Agent Harness & Bridge Abuse in LLM Runtime. Pink nodes are attack steps; blue/teal nodes are mitigations; terminal goals are highlighted in bright pink.

The tree shows four terminal attacker goals: gaining control of the active agent session, executing actions beyond intended permissions, extracting session data or tool outputs, and maintaining persistent influence over agent behaviour across sessions.

For clarity, here is the same tree rendered as an interactive diagram, with mitigations separated out:

The point of this is not that every node is equally likely. The point is that it shows how the system’s core security story is not “prompt in, answer out”. It is a much richer set of relationships between identity, state, capability, transport, and execution.

Why prompt injection is not the whole story

This is where I want to be quietly rude about the current state of AI security discourse.

A lot of it is still stuck at the level of prompt injection, jailbreaks, or “the model did a naughty completion”. Those are real concerns. They matter. But they also risk becoming the AI equivalent of talking about XSS while ignoring the auth model, session boundaries, and backend privilege graph.

If a system’s runtime can exchange credentials, open remote sessions, move control signals across a transport, and steer local execution, then the security conversation cannot stop at the prompt layer. The prompt is often just one source of untrusted influence. The more consequential questions may be:

- who can mint runtime capability?

- how is it bound?

- how does it persist?

- what can it control once established?

- what drifts during recovery or reconnect?

- what policy layers are optional, stale, or inconsistently enforced?

That is where adult supervision needs to show up.

A more accurate mental model

Traditional applications are often modelled like this:

identity → permission → API

Modern agent systems are better modelled like this:

identity → capability → session → transport → execution → tool → network → state

That is a loop-rich system. Which means the old familiar issues return, but with more opportunities for subtle composition failures: confused deputy, privilege propagation surprises, token replay, session fixation-like behaviours, stale authority after transition, inconsistent mode enforcement, control/data plane confusion.

None of that is science fiction. It is regular systems security. The AI part just distracts people from noticing it.

Continue reading: Part 3 — Defending Against Runtime Abuse

As ever, thanks for reading and feel free to leave comments down below!